Amazon Rekognition is a service offered by Amazon Web Services that makes it easy to add powerful visual analysis capabilities to your applications. It is a deep-learning-powered image and video recognition engine that detects faces, objects, and scenes. It can also extract text, recognize celebrities, and identify inappropriate content in images and videos.

These capabilities open up some interesting possibilities. Here are a few use cases:

- Attendance Tracking - Using Amazon Rekognition, a school administration can automate attendance by capturing each student's face as they enter the premises.

- Law Enforcement - Stolen vehicles can be identified by reading their license plates with Amazon Rekognition's text detection, and unattended baggage can be flagged with its object detection.

- Natural Disasters - During natural disasters, satellite images can be analyzed to search for humans and animals in critical condition. Amazon Rekognition's object and scene detection can play an important role in enabling rescue operations based on these images.

- Authentication - Your face is your password. You can log in to an application using your face, and Amazon Rekognition verifies it against your stored face images.
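The license-plate example relies on Rekognition's text detection. Below is a minimal sketch, assuming a boto3 Rekognition client and an image already uploaded to S3; the function name, bucket, key and the 80% confidence cut-off are illustrative placeholders, not part of the demo program.

```python
def detect_text_lines(client, bucket, key, min_confidence=80.0):
    """Return the lines of text Rekognition detects in an S3-hosted image.

    `client` is expected to be a boto3 Rekognition client, e.g.
    boto3.client("rekognition", region_name="ap-south-1").
    `min_confidence` is an illustrative cut-off chosen for this sketch.
    """
    response = client.detect_text(
        Image={"S3Object": {"Bucket": bucket, "Name": key}}
    )
    lines = []
    for detection in response.get("TextDetections", []):
        # DetectText reports whole LINEs as well as individual WORDs;
        # keep only confident LINE entries (e.g. a full license plate).
        if detection["Type"] == "LINE" and detection["Confidence"] >= min_confidence:
            lines.append(detection["DetectedText"])
    return lines
```

Filtering on `Type` avoids returning each plate twice, once as a line and again word by word.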

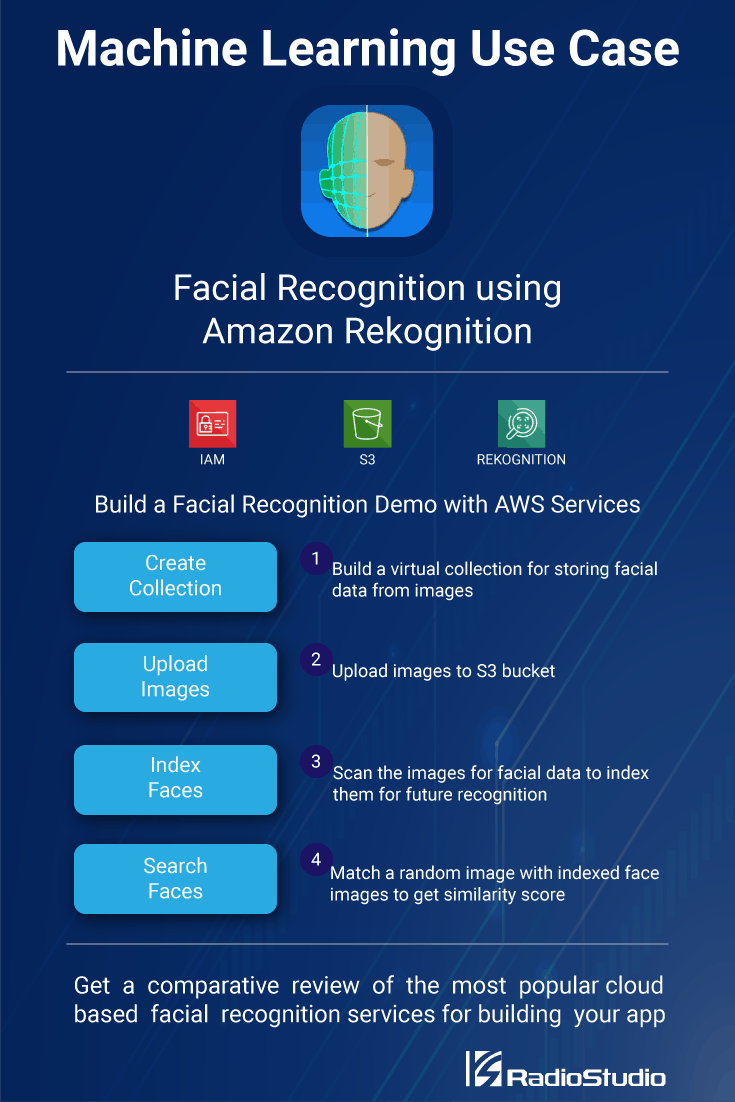

Face recognition and facial analysis are central requirements in many of these use cases. In this blog post, we will explore how to use AWS services to perform face recognition and build a small demo application with Amazon Rekognition. The demo application is written in Python using boto3, the AWS SDK for Python.

If you are keen to explore cloud-based facial recognition services for your upcoming project, here is a brief comparison of the popular facial recognition services.

Recognizing Faces Using AWS Services

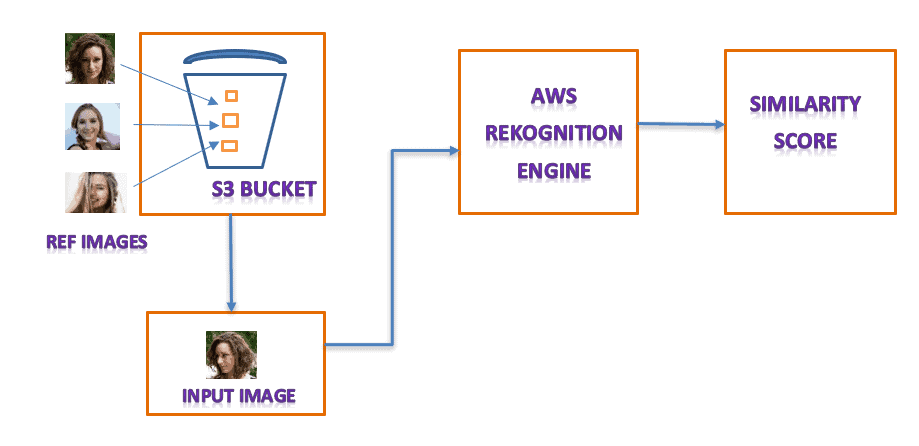

Face recognition involves comparing a face image against stored reference images. On AWS, the reference face images can be kept in Amazon S3, and together with Amazon Rekognition, S3's storage features can be used to build a face recognition application.

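Amazon Rekognition organizes indexed faces in a container known as a collection. Collections can be created or deleted dynamically, and each one acts as the face repository for a single application. A collection does not store the images themselves: when an image is indexed, Rekognition detects the faces in it, extracts their facial features, and stores that information in the collection. In this demo, the images are read from an S3 bucket and run through the Amazon Rekognition engine.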

The result of a facial recognition match is returned as a similarity score, expressed as a percentage, between the input image and each matching reference image. The score behaves much like a confidence value and is a good indicator of whether a given input face matches one of the reference images.
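To turn the similarity score into a yes/no decision, an application can compare the best score against a threshold. Below is a minimal sketch, assuming the `FaceMatches` list that boto3's `search_faces_by_image` returns (each entry carries a `Similarity` percentage and the matched `Face`); the helper name is hypothetical, and the 80% default simply mirrors the threshold the demo program uses.

```python
def best_match(face_matches, threshold=80.0):
    """Return (external_image_id, similarity) for the strongest match, or None.

    `face_matches` is the "FaceMatches" list from a SearchFacesByImage
    response. Each entry has a "Similarity" percentage and a "Face" dict.
    """
    if not face_matches:
        return None  # no indexed face cleared the service-side threshold
    top = max(face_matches, key=lambda m: m["Similarity"])
    if top["Similarity"] < threshold:
        return None  # strongest candidate is still too dissimilar
    # ExternalImageId is only present if it was set when the face was indexed.
    return top["Face"].get("ExternalImageId"), top["Similarity"]
```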

Test Driving the Amazon Rekognition Service

Let’s take Amazon Rekognition for a short test drive. We will use a few reference images of two people and observe the similarity scores Rekognition returns in different scenarios.

Here are the reference images for the two people, Mary and Alice:

Person 1 (Mary)

Person 2 (Alice)

To verify the similarity scores returned by Rekognition, we will test three scenarios:

Scenario 1 - Using an input image of a person whose reference images are not in the collection.

Scenario 2 - Using a new input image of a person whose reference images are in the collection.

Scenario 3 - Using an input image that is identical to one of the reference images in the collection.

Follow the steps below to set up the test environment for Amazon Rekognition.

Before you begin

Make sure that you have an AWS account and an IAM user with full access to the S3 and Rekognition services.

Make sure that you have that IAM user's access key ID and secret access key.

Download the application package containing the demo program and the reference and input images.

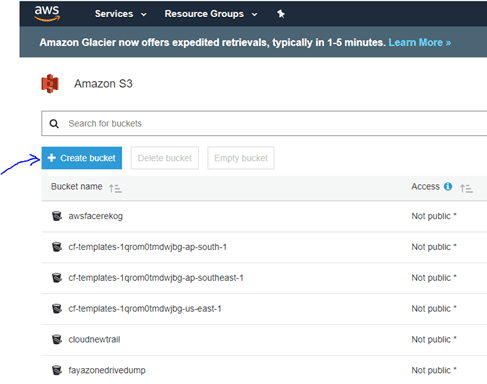

Step 1: Create a new S3 bucket

First, create a storage repository to host the reference images, which means setting up an S3 bucket.

Follow the standard steps in the AWS console to create an S3 bucket named “aws-face-rek” in the “ap-south-1” region.
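If you prefer to script this step instead of using the console, the same bucket can be created with boto3. Below is a minimal sketch; the helper name is hypothetical. Outside us-east-1, S3 expects the region as a `LocationConstraint`, while us-east-1 must be left implicit.

```python
def create_reference_bucket(s3_client, bucket_name="aws-face-rek", region="ap-south-1"):
    """Create the S3 bucket that will hold the reference face images.

    `s3_client` is expected to be boto3.client("s3", region_name=region).
    Bucket names are global across AWS, so the default name may already
    be taken; pick your own if the call fails with BucketAlreadyExists.
    """
    if region == "us-east-1":
        # us-east-1 is the default location and is not passed explicitly.
        return s3_client.create_bucket(Bucket=bucket_name)
    return s3_client.create_bucket(
        Bucket=bucket_name,
        CreateBucketConfiguration={"LocationConstraint": region},
    )
```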

Step 2: Install the boto3 library

The demo program is written in Python 3. Make sure that you have a working Python 3 installation, and then install the boto3 SDK:

```shell
pip install boto3
```

Step 3: Update your AWS credentials in the demo code

Edit the demo Python program (facerek.py) and fill in your access key and secret access key. The placeholders are defined as <YOUR_ACCESS_KEY> and <YOUR_SECRET_KEY>. The AWS region is set to ap-south-1 (Mumbai), but you can change it to suit your needs.
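Hardcoded keys are easy to leak, for example by committing them to a repository. As an alternative to editing the placeholders, boto3 also reads credentials from the standard `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` environment variables. Below is a minimal sketch of a hypothetical helper (not part of facerek.py) that builds the client arguments from the environment and fails early with a clear message if a key is missing.

```python
import os

def client_kwargs_from_env(region="ap-south-1", environ=None):
    """Build keyword arguments for boto3.client() from environment variables.

    Hypothetical helper; use it as
    boto3.client("rekognition", **client_kwargs_from_env()).
    """
    env = os.environ if environ is None else environ
    missing = [name for name in ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")
               if not env.get(name)]
    if missing:
        raise RuntimeError("Missing AWS credentials: " + ", ".join(missing))
    return {
        "region_name": region,
        "aws_access_key_id": env["AWS_ACCESS_KEY_ID"],
        "aws_secret_access_key": env["AWS_SECRET_ACCESS_KEY"],
    }
```

Since boto3 picks up these variables on its own, passing them explicitly mainly serves the early check.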

Note: The credentials must belong to an IAM user who has full access to the S3 and Rekognition services.
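One way to grant this access is to attach the AWS managed policies `AmazonS3FullAccess` and `AmazonRekognitionFullAccess` to the IAM user. Below is a minimal sketch using the IAM API, assuming it is run with credentials that are allowed to manage IAM users; the helper name and user name are placeholders.

```python
# ARNs of the AWS managed policies giving full S3 and Rekognition access.
DEMO_POLICY_ARNS = [
    "arn:aws:iam::aws:policy/AmazonS3FullAccess",
    "arn:aws:iam::aws:policy/AmazonRekognitionFullAccess",
]

def grant_demo_access(iam_client, user_name):
    """Attach the demo's managed policies to an existing IAM user.

    `iam_client` is expected to be boto3.client("iam") created with
    credentials that may attach policies to users.
    """
    for arn in DEMO_POLICY_ARNS:
        iam_client.attach_user_policy(UserName=user_name, PolicyArn=arn)
    return DEMO_POLICY_ARNS
```

Full access is convenient for a demo; for production, a policy scoped to the specific bucket and Rekognition actions is the safer choice.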

The Most Popular Facial Recognition Services Compared

Take a look at six other popular cloud-based facial recognition services and see how they compare with Amazon Rekognition.

Facial Recognition Demo

The demo Python program is driven by command-line options. Four options are available:

- Creating Collection (1) - Creating a collection is the first step in working with the Rekognition service. Since all faces are indexed within a collection, the program lets the user create one.

- Deleting Collection (2) - Users can delete an existing collection, erasing all of its indexed data.

- Index Face (3) - This operation registers a new face. The Rekognition service accepts a valid face image and returns a Face ID.

- Match Face (4) - This is the actual facial recognition operation. An input image is matched against all the indexed faces, and the program returns the similarity score of the best match.
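The numbered options lend themselves to a small command-line dispatcher. The exact argument layout of facerek.py is not shown here, so the following is a hypothetical argparse sketch: the first argument selects the operation, and options 3 and 4 take the name of an image stored in the S3 bucket.

```python
import argparse

# Hypothetical mapping of the demo's option numbers to operation names.
OPERATIONS = {
    1: "create_collection",
    2: "delete_collection",
    3: "index_face",
    4: "match_face",
}

def parse_args(argv):
    """Parse e.g. ["3", "alice-1.jpg"] into ("index_face", "alice-1.jpg")."""
    parser = argparse.ArgumentParser(description="Amazon Rekognition face demo (sketch)")
    parser.add_argument("option", type=int, choices=sorted(OPERATIONS),
                        help="1=create, 2=delete, 3=index, 4=match")
    parser.add_argument("image", nargs="?",
                        help="object key of an image in the S3 bucket (options 3 and 4)")
    args = parser.parse_args(argv)
    if args.option in (3, 4) and not args.image:
        parser.error("options 3 and 4 require an image name")  # exits with status 2
    return OPERATIONS[args.option], args.image
```

With this layout, `python facerek.py 3 alice-1.jpg` would place the image name in `sys.argv[2]`.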

For a more conclusive test of the facial recognition capabilities, an additional test image is provided for each of Alice and Mary, to be used as input.

Running the demo program with the test images gives a clear picture of how the application works. Before you run the demo program, upload all the face images in the ref_images directory to the S3 bucket.
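The upload can be done in the S3 console or scripted. Below is a minimal sketch, assuming the reference images sit in a local `ref_images` directory and that each object key should equal the file name, so the name later passed to the demo is simply, e.g., `alice-1.jpg`. The helper name is hypothetical.

```python
import os

IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png")  # Rekognition accepts JPEG and PNG

def upload_reference_images(s3_client, bucket, directory="ref_images"):
    """Upload every JPEG/PNG file in `directory` to `bucket`, keyed by file name.

    `s3_client` is expected to be boto3.client("s3").
    Returns the list of object keys that were uploaded.
    """
    uploaded = []
    for name in sorted(os.listdir(directory)):
        path = os.path.join(directory, name)
        if os.path.isfile(path) and name.lower().endswith(IMAGE_EXTENSIONS):
            s3_client.upload_file(path, bucket, name)  # (Filename, Bucket, Key)
            uploaded.append(name)
    return uploaded
```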

Creating Collection

The first step is to create a collection.
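Creating a collection comes down to a single CreateCollection call. Below is a minimal sketch with boto3; the collection ID `face-rek-demo` and the helper name are hypothetical, since the demo's own ID is not shown.

```python
def create_collection(client, collection_id="face-rek-demo"):
    """Create a Rekognition collection and return its ARN.

    `client` is expected to be boto3.client("rekognition", region_name=...).
    Creating a collection whose ID already exists fails with
    ResourceAlreadyExistsException.
    """
    response = client.create_collection(CollectionId=collection_id)
    # StatusCode is an HTTP-style code; 200 means the collection was created.
    if response["StatusCode"] != 200:
        raise RuntimeError(f"CreateCollection returned {response['StatusCode']}")
    return response["CollectionArn"]
```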

Indexing Face

You can now index face images. Here is how to do it for alice-1.jpg.

Similarly, you can add alice-2.jpg and alice-3.jpg. To test the system with multiple people, also add mary-1.jpg, mary-2.jpg and mary-3.jpg. This indexes three reference images each for Alice and Mary.

Note: Remember that the third argument refers to an image in the S3 bucket, not on the local filesystem.
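Indexing maps to the IndexFaces API, which reads the image directly from S3. Below is a minimal sketch, assuming the `aws-face-rek` bucket and a hypothetical collection ID `face-rek-demo`. It also stores the image key as `ExternalImageId` so that a later match can be traced back to a reference file; that choice is this sketch's, not necessarily the demo's.

```python
def index_face(client, image_key, bucket="aws-face-rek", collection_id="face-rek-demo"):
    """Index the largest face in an S3 image and return its FaceId.

    `client` is expected to be boto3.client("rekognition", region_name=...).
    """
    response = client.index_faces(
        CollectionId=collection_id,
        Image={"S3Object": {"Bucket": bucket, "Name": image_key}},
        ExternalImageId=image_key,  # lets match results name the source file
        MaxFaces=1,                 # keep only the largest face in the image
    )
    records = response["FaceRecords"]
    if not records:
        raise ValueError(f"No face could be indexed from {image_key}")
    return records[0]["Face"]["FaceId"]
```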

Matching Face

You can use alice-4.jpg and mary-4.jpg to match against the indexed faces. The matching operation returns a similarity score, which will be quite high because the image contains a known face. This is scenario 2 in action.

You can try scenario 3 by using alice-1.jpg as the input. Since this exact image is already indexed, you will most likely get a similarity score at or very close to 100%.

To test scenario 1, use scarlett-1.jpg, an image of a different person whose face is not indexed. In this case, you are likely to get no matching face.

Amazon Rekognition lets you set a similarity threshold below which matched faces are not returned. This demo program uses a threshold of 80%. With a lower threshold, faces of dissimilar people can also be reported as matches, so the threshold needs to be tuned to the application's requirements.
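Matching corresponds to the SearchFacesByImage API. Below is a minimal sketch, again with the hypothetical collection ID `face-rek-demo`; an empty match list is what scenario 1 looks like to the program. If Rekognition finds no face at all in the input image, the call raises `InvalidParameterException` instead.

```python
def match_face(client, image_key, bucket="aws-face-rek",
               collection_id="face-rek-demo", threshold=80.0):
    """Search the collection for the face in an S3 image.

    Returns (external_image_id, similarity) for the best match, or None
    when no indexed face reaches `threshold` percent similarity.
    """
    response = client.search_faces_by_image(
        CollectionId=collection_id,
        Image={"S3Object": {"Bucket": bucket, "Name": image_key}},
        FaceMatchThreshold=threshold,
        MaxFaces=1,  # only the best match is needed
    )
    matches = response["FaceMatches"]
    if not matches:
        return None
    best = matches[0]  # matches are ordered by similarity, highest first
    return best["Face"].get("ExternalImageId"), best["Similarity"]
```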
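One way to tune the threshold is to run a search once with a deliberately low `FaceMatchThreshold` and a larger `MaxFaces`, keep the raw `FaceMatches`, and then check offline which candidates would survive at each threshold under consideration. Below is a minimal sketch of that offline step; the helper name and the candidate thresholds are arbitrary examples.

```python
def matches_by_threshold(face_matches, thresholds=(60.0, 70.0, 80.0, 90.0)):
    """For each threshold, list the ExternalImageIds whose similarity reaches it.

    `face_matches` is the "FaceMatches" list of a SearchFacesByImage
    response obtained with a low FaceMatchThreshold.
    """
    report = {}
    for threshold in thresholds:
        report[threshold] = [
            m["Face"].get("ExternalImageId")
            for m in face_matches
            if m["Similarity"] >= threshold
        ]
    return report
```

If a candidate threshold starts admitting another person's reference images for a known input, it is too permissive for that collection.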

Deleting Collection

Finally, you can delete the collection, which clears away all the indexed face data.
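Deleting maps to the DeleteCollection API. Below is a minimal sketch with the hypothetical collection ID `face-rek-demo`. It removes only the face data held by Rekognition; the reference images in the S3 bucket are left untouched.

```python
def delete_collection(client, collection_id="face-rek-demo"):
    """Delete a collection together with all face data indexed in it.

    `client` is expected to be boto3.client("rekognition", region_name=...).
    This cannot be undone. Returns True when the service reports success.
    """
    response = client.delete_collection(CollectionId=collection_id)
    return response["StatusCode"] == 200
```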

Final Analysis

In our analysis, Amazon Rekognition turned out to be a good tool for use cases that need facial recognition. It is fast and quite accurate, even for medium-quality photos taken with a phone camera in poor lighting.

Be sure to check the limits on Amazon Rekognition usage if you are building a very large application that needs to store a lot of images. Organizing faces into collections gives you a smarter way of arranging them, and keeping each search limited to the relevant collection helps avoid false recognitions.
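To keep an eye on how large a collection has grown, the faces it holds can be counted with the paginated ListFaces API. Below is a minimal sketch that follows `NextToken` until every page is read; the helper name and the collection ID `face-rek-demo` are hypothetical placeholders.

```python
def count_faces(client, collection_id="face-rek-demo", page_size=1000):
    """Return the number of faces stored in a collection.

    `client` is expected to be boto3.client("rekognition", region_name=...).
    ListFaces returns results in pages; NextToken marks further pages.
    """
    total = 0
    kwargs = {"CollectionId": collection_id, "MaxResults": page_size}
    while True:
        page = client.list_faces(**kwargs)
        total += len(page["Faces"])
        token = page.get("NextToken")
        if not token:
            return total
        kwargs["NextToken"] = token  # request the next page
```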

You can also check out our other demos on machine learning and image recognition for food quality inspection and retail store replenishment.
